Lisa: Although this course content is not necessarily as glamorous as some of the other topics I have gone over, it is something about which I am very passionate. How do we take research and actually integrate it into practice? I would say over the course of the past 10 to 15 years, there has been a big push to get research into practice, but it takes a good chunk of time for research to hit the practice setting. How can we as clinicians, administrators, or researchers collectively work together to effectively turn research into practice on a regular basis to provide the best care for our patients? This will be the gist of what we talk about today.

I am going to review a few terms to make sure that we are all on the same page and to set a good foundation before we hit the ground running. We all need to use similar terminology, and after we do that, I want to present some of the resources that are out there. These resources may help you find research that you could integrate into practice. We also need to talk about how to get different stakeholders on board with integrating research into practice. When I say stakeholders, I mean people like yourselves (as practitioners), as well as your managers, team leaders, and the patients and families.

Again, it is a complex process to get research into practice. I like to use the example of when I worked full-time in inpatient rehab at Ohio State's hospital. I remember how much effort it took to take one day off work. You have to get your OT team lead's approval, your rehab team's approval, and then you have to make sure that your patients are scheduled and covered for the next day. Imagine trying to take an evidence-based intervention, with all the complexities that go with that intervention, and getting it approved and worked into your practice setting.

Evidence-Based Practice

What is evidence-based practice?

This quote came from the book Evidence-Based Medicine by Dr. Sackett back in 1996. We, as clinicians, are taking the best research that is out there and combining that with our clinical expertise in order to make client-centered intervention applicable to the patients that we serve. It is an interplay between what we know as experienced clinicians and what the research tells us. I do not care if you have only three months of experience; it is still experience and that is still valuable. It is taking the experience we have as clinicians and merging it with what we know is out there.

What constitutes best evidence?

- Meta-analyses

- Systematic reviews

- Critically appraised topics

- Randomized controlled trials

There is a lot of research out there, everything ranging from top-tier meta-analysis research all the way down to something that is not even listed here, case study research. There is a big hierarchy of research, but best evidence really consists of the four types of research listed above.

Meta-Analyses

A meta-analysis is where many researchers do a glorified literature review on a topic. Let's use the example of effective fall prevention programs for older adults. The researchers would find as many good articles as they could on this topic and look at the findings and statistics. Then, based on those statistics, these researchers would run their own set of statistical analyses on those numbers to determine how powerful these fall prevention programs were.
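To make the idea of "running their own statistics on those numbers" a little more concrete, here is a minimal sketch of one common way results can be combined: an inverse-variance (fixed-effect) pooled estimate, in which more precise studies count for more. This is only an illustration, not the method of any particular published meta-analysis. The function name `pool_fixed_effect` and the three study values are hypothetical and made up for the example.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Return the inverse-variance (fixed-effect) pooled estimate and its standard error.

    effects: one effect estimate per study (for example, a log rate ratio for falls)
    std_errors: the standard error reported for each of those estimates
    """
    if not effects or len(effects) != len(std_errors):
        raise ValueError("need at least one study and one standard error per effect")

    # Each study is weighted by 1 / SE^2, so precise studies carry more weight.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)

    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Hypothetical numbers for illustration only (not taken from real studies).
log_rate_ratios = [-0.30, -0.10, -0.25]
standard_errors = [0.10, 0.20, 0.15]

pooled, pooled_se = pool_fixed_effect(log_rate_ratios, standard_errors)
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"pooled log rate ratio: {pooled:.3f} (95% CI {low:.3f} to {high:.3f})")
```

A negative pooled value here would suggest fewer falls in the program groups overall. Real meta-analyses usually also check how much the studies disagree with one another and may use a random-effects model instead, which this sketch leaves out.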

Systematic Reviews

A small step below that are systematic reviews. Systematic reviews are also glorified literature reviews, and I say glorified because typically you cannot just review something on your own. You need at least two people; it could be yourself plus at least one other person. There is a pre-structured set of criteria and a question that you want answered. What programs are effective for preventing falls in older adults? Then, you have certain check boxes that each article has to hit. These are your inclusion and exclusion criteria. Once you find all the articles that you feel address the topic that you are interested in (fall prevention), you sum up the results of those articles. The results should give us an idea as to what programs are effective for preventing falls and what programs are not.

Critically Appraised Topics

A step down from that are critically appraised topics. Many students will do a critically appraised topic. They search the research that is available about a topic. You can get away maybe with summarizing one research article, but typically you want to include more than one research article in this type of review. I have also heard of critically appraised topics referred to as critically appraised papers. They are valuable, but they are not quite as rigorous as the systematic reviews or meta-analyses.

Randomized Controlled Trials

Lastly, in terms of best evidence, you have individual randomized controlled trials. These are your typical research experiments. For example, one group of older adults is involved in a fall prevention program (experimental group). There is also a control group, or a group of older adults who are just receiving, for example, balance-related exercises. At the end of that research study, you compare the two groups' outcomes to determine if this fall prevention program was in fact effective.

Those are your four best types of evidence. There are many examples of systematic reviews in adult rehab, like repetitive task practice after stroke, constraint-induced movement therapy, home modifications for older adults to prevent falls, and comparing how aerobic and resistive exercise can decrease pain in adults with rheumatoid arthritis. There are many across different populations and different settings. The evidence is out there. Years ago, the evidence was not available, and we would just go with our gut as to what interventions were best for patients. Now that we have the evidence, we should use it.

Overview of Implementation Science

Why is this evidence not getting used all the time? I have been guilty of it myself, and I say hindsight is always 20/20. When I was a new clinician, could I have delivered better care and done a better job by staying abreast of the literature? I am sure I could have, but there can be barriers. Integrating research into practice can be complex, and there is a whole field devoted to the study of how to get research into practice, called implementation science. This definition was written back in 2006 by two individuals who are not occupational therapists.

“The scientific study of methods to promote the systematic uptake of research findings and other evidence-based practices into routine practice…to improve the quality and effectiveness of health services and care” (Eccles & Mittman, 2006, para 2).

The implementation of evidence into practice is not just an OT issue; it is an allied health issue, an issue in medicine, an issue in public health, an issue in nursing, etc. Researchers in this field are studying how to move research into practice, which I think is cool, and it is about time. We have many anecdotal experiences as to what effectively gets research into practice, but now there is a field entirely devoted to the research behind integrating research into practice. Outcomes that they typically look at are going to be things like the following. What does it take for a facility to adopt a new intervention or a new program? Is that intervention going to be acceptable? Do the therapists accept using it? Do patients accept receiving it? Is it feasible based on the structure of your organization, your facility, or your management? Can clinicians implement this intervention with fidelity? Do therapists have the skills and the resources to be able to take an intervention, an evidence-based practice, and deliver it to patients the way that it is intended? Are there costs associated with these interventions? If so, do the costs outweigh the benefits, or vice versa? Then lastly, another outcome that implementation scientists will look at is the sustainability of an intervention. Even though we are encouraging this particular intervention to get used, like functional ESTIM, is it sustainable, or can it stand the test of time?

Related Terminology

- Knowledge translation

- Research-to-practice gap

- Practice-based evidence

- Evidence-supported interventions

- Evidence-informed practices

- Knowledge diffusion

- Implementation research

- Research utilization

Implementation science itself might seem like a new term. The most familiar term, when I first started this journey, was the term knowledge translation. Knowledge translation is more commonly used. When I attended the Dissemination and Implementation Conference, put on by a group called AcademyHealth (the National Institutes of Health sponsor this), not once did I hear anybody from the United States refer to this issue of getting research into practice as knowledge translation. The people who did refer to that issue as knowledge translation were people from Canada and Europe. Our Canadian and European colleagues are, in my opinion, far ahead of where we need to be in terms of researching how to get research into practice. Another term that I think you may be familiar with is "research-to-practice gap." Practice-based evidence looks in the opposite direction from where we are looking. This is going into a rehab facility and looking at what therapists are already doing. We are determining what works based on what the therapists are doing with their patients, as opposed to taking research that works and using it with patients. There are other references for your review as well.

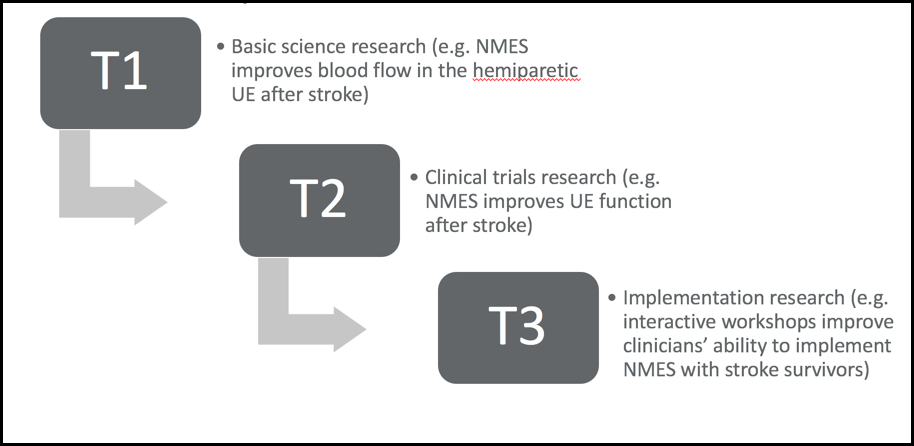

Translational science, implementation science or whatever you want to call it, can be broken down into three blocks.

Figure 1. Translational continuum.

I think there are actually four (blocks) now, but for the sake of simplicity, we are only going to focus on the three. In this translational continuum example, the T1 (translational) block is basic science research. As an example, there are researchers working in a lab looking at the effects of neuromuscular electrical stimulation (NMES) on improving blood flow after stroke in the hemiparetic upper extremity. We need blood to flow, so that is important science, but as a clinician, this means nothing to me as it is outside the scope of my practice. The T2 block, however, is the research we are most familiar with as clinicians. It is clinical trial research where they are looking at NMES and how it affects upper extremity function after a stroke. There is a good amount of evidence that says that NMES is effective for improving outcomes in the upper extremity post-stroke. By the T3 block, we know functional NMES is good. Now, how do we get clinicians, facilities, and administrators on board with integrating functional NMES into practice? This is where implementation science comes into play. Your implementation researchers are going to create something like an interactive workshop to teach therapists how to use NMES. The researchers will compare the outcomes of therapists who attended the interactive workshop to the outcomes of therapists who only received a standardized protocol. This is the research that looks at what types of strategies, techniques, workshops, protocols, or educational meeting sessions give clinicians the skills and resources that they need in order to implement research into practice.

Development of EBPs

You have your four types of best evidence that influence what protocols are best practice. Again, those four types are meta-analyses, systematic reviews, critically appraised topics or papers, and randomized controlled trials. The first three are literature reviews. Here are some examples of what are considered to be best practices in the OT world.

- Repetitive task practice

- Constraint-induced movement therapy

- Mental practice

- Aerobic and resistive exercise for arthritis (pain)

- A Matter of Balance (falls)

Implementation of EBPs

We know "best practices" are out there, but getting them into practice is easier said than done. I feel like I am preaching to the choir. I do hope some administrators and managers attend this course in addition to clinicians. Integrating research into practice requires getting a lot of folks on board. If we have all of this evidence, why is it not getting into practice? There are many reasons. Here is an interesting statistic. It takes 17 years for 14% of research to get integrated into practice. We are talking about a small chunk of research that gets used in practice 17 years after it is discovered. You can see how that is a problem. People have researched the reasons why that is happening.

Barriers

- Time

- Resources (staff, funding, equipment)

- Lack of training

- Difficulty locating research

- Difficulty interpreting research

- Lack of support

- Personal perceptions of interventions

- Organizational factors

Time is a big barrier, as are resources. An example might be that you do not have enough staff to implement the new protocol or enough funding to purchase equipment. There also may be a lack of staff who fully understand the new intervention that you want to implement. As evidence changes, skill sets need to change as well, but in order to do that, you have to be provided with the tools. There may be difficulty locating good research. Then once you do find it, how do you interpret the results?

Facilitators

- Access to online resources

- Interactive workshops

- Site “champions”

- Managerial support

- Positive experiences with EBP

- Manualized protocols

On the flip side, there has been research to show that there are ways to overcome these barriers. For instance, you have access to research via online resources. We will spend a good chunk of the rest of this talk on what those online resources are. Interactive workshops are great as well. You may have gone to those CEU courses where you get hands-on training and have a back-and-forth dialogue with somebody who is considered to be an expert in a certain intervention. Site champions or site mentors are effective for helping other clinicians who might not be as familiar with an intervention. They can help clinicians use that intervention regularly with their patients. Standardized manuals and protocols can be effective for giving therapists a cookbook on how to use certain interventions effectively. Support, not only from your colleagues but also from your managers and administrators, has been found to be an effective way of incorporating evidence into practice.